Two Perspectives on Why Column Rank Equals Row Rank and Their Connections

Frankly speaking, there’s no need to write a blog post explaining this result, since it has been taught in undergraduate classes for decades. But while reviewing my algebra book, I found myself pondering a deeper question: what is the intrinsic connection between these two perspectives? I wanted an explanation that goes beyond the formal mathematical proof.

Review: Fundamental Theorem of Linear Maps #

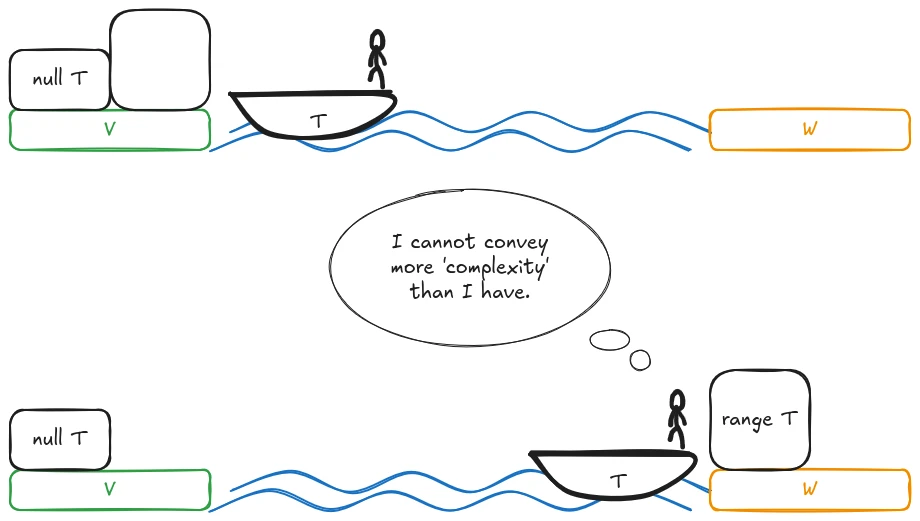

Matrices are essentially representations of linear maps, so I’ll begin by recalling the fundamental theorem of linear maps. For a linear map $T: V \to W$ with $V$ finite-dimensional:

$$ \dim{V} = \dim{\operatorname{range} T} + \dim{\operatorname{null} T} $$

This result is known as the rank-nullity theorem. An analogous counting result holds in group theory for a homomorphism $f$ defined on a finite group $G$:

$$ |G| = |\ker{f}| \cdot |\operatorname{im}f| $$
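Both counts can be checked by brute force over a finite field, where a linear map is also a homomorphism of finite additive groups. The sketch below is only an illustration under my own assumptions: the matrix `A` and the choice of the field GF(2) are hypothetical and not part of the argument.

```python
from itertools import product

# Hypothetical example matrix over GF(2); T(x) = A x with arithmetic mod 2.
# The third row is the sum of the first two, so the rank is 2.
A = [
    [1, 0, 1, 1],
    [0, 1, 1, 0],
    [1, 1, 0, 1],
]
n = len(A[0])

def apply(A, x):
    """Compute A x over GF(2)."""
    return tuple(sum(a * b for a, b in zip(row, x)) % 2 for row in A)

domain = list(product((0, 1), repeat=n))               # all 2^n vectors of F^n
kernel = [x for x in domain if not any(apply(A, x))]   # null T
image = {apply(A, x) for x in domain}                  # range T

# Group-theoretic count: |G| = |ker f| * |im f|
assert len(domain) == len(kernel) * len(image)

# A subspace of dimension d over GF(2) has 2^d elements,
# so the exponents recover the dimensions.
dim_null = len(kernel).bit_length() - 1
dim_range = len(image).bit_length() - 1
assert n == dim_range + dim_null
```

Taking base-2 logarithms of the group count gives exactly the rank-nullity identity in this setting.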

Perpendicular Subspaces #

Consider an $m \times n$ matrix $A$ over a field $F$. We may interpret $A$ as representing two distinct linear maps:

- $T: F^n \to F^m$ defined by $T(x) = Ax$ (where $x$ is a column vector)

- $T': F^m \to F^n$ defined by $T'(y) = y^T A$ (where $y$ is a column vector, making $y^T$ a row vector)

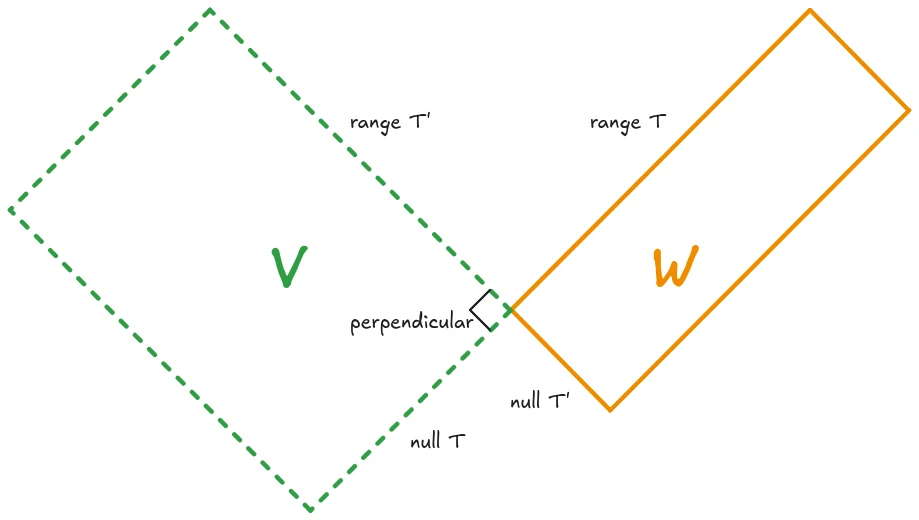

If we interpret matrix multiplication as taking linear combinations, then $Ax$ is a linear combination of the columns of $A$ and $y^T A$ is a linear combination of its rows. So the column space of $A$ is precisely $\operatorname{range}T$, while the row space of $A$ is $\operatorname{range}T'$. Thus, the column rank equals $\dim{\operatorname{range}T}$ and the row rank equals $\dim{\operatorname{range}T'}$.

If we instead interpret matrix multiplication as a system of linear equations, each entry of $Ax$ is the inner product of $x$ with the corresponding row of $A$. Consequently, for any $x \in \operatorname{null}T$ and any linear combination $r$ of the rows of $A$, we have $r \cdot x = 0$. This establishes that $\operatorname{null}T \perp \operatorname{range}T'$, or equivalently, $\operatorname{range}T' \subseteq (\operatorname{null}T)^\perp$.
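As a sanity check, the orthogonality can be verified exhaustively over a small finite field. This is a minimal sketch under my own assumptions: the matrix `A` and the field GF(3) are hypothetical choices made so that every vector can be enumerated. It also confirms that $(\operatorname{null}T)^\perp$ has dimension $n - \dim{\operatorname{null}T}$ in this example.

```python
from itertools import product

p = 3  # assumption: work over GF(3) so brute force is exact
# Hypothetical matrix; the third row is the sum of the first two mod 3.
A = [
    [1, 2, 0, 1],
    [0, 1, 1, 2],
    [1, 0, 1, 0],
]
m, n = len(A), len(A[0])

def dot(u, v):
    """Standard dot product over GF(p)."""
    return sum(a * b for a, b in zip(u, v)) % p

vectors = list(product(range(p), repeat=n))

# null T: all x with A x = 0, i.e. every row of A is orthogonal to x
null_T = [x for x in vectors if all(dot(row, x) == 0 for row in A)]

# range T': all linear combinations y^T A of the rows of A
row_space = {
    tuple(sum(y[i] * A[i][j] for i in range(m)) % p for j in range(n))
    for y in product(range(p), repeat=m)
}

# Every row combination is orthogonal to every null vector.
assert all(dot(r, x) == 0 for r in row_space for x in null_T)

# (null T)^perp computed directly from its definition
perp = {v for v in vectors if all(dot(v, x) == 0 for x in null_T)}
assert row_space <= perp
assert len(perp) * len(null_T) == p ** n  # dim perp = n - dim null
```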

From this inclusion, we obtain the dimension inequality:

$$\dim{\operatorname{range}T'} \leq \dim{(\operatorname{null}T)^\perp} = \dim{F^n} - \dim{\operatorname{null}T}$$

Applying the rank-nullity theorem yields:

$$\dim{\operatorname{range}T'} \leq \dim{\operatorname{range}T}$$

By applying the same argument to $T'$ (that is, to the matrix $A^T$), we conclude $\dim{\operatorname{range}T} \leq \dim{\operatorname{range}T'}$, hence $\dim{\operatorname{range}T} = \dim{\operatorname{range}T'}$.
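The equality is easy to test on a concrete matrix. The sketch below, which is my own illustration rather than part of the proof, computes the row rank by exact Gaussian elimination over the rationals and the column rank by running the same routine on the transpose. The helper names `rank_by_row_reduction` and `transpose` and the matrix `A` are hypothetical.

```python
from fractions import Fraction

def rank_by_row_reduction(rows):
    """Dimension of the span of `rows`, via exact Gaussian elimination over Q."""
    m = [[Fraction(x) for x in row] for row in rows]
    num_cols = len(m[0]) if m else 0
    r = 0  # number of pivots found so far
    for c in range(num_cols):
        # Find a row at or below position r with a nonzero entry in column c.
        pivot = next((i for i in range(r, len(m)) if m[i][c] != 0), None)
        if pivot is None:
            continue  # no pivot in this column
        m[r], m[pivot] = m[pivot], m[r]
        # Clear column c in every other row.
        for i in range(len(m)):
            if i != r and m[i][c] != 0:
                factor = m[i][c] / m[r][c]
                m[i] = [a - factor * b for a, b in zip(m[i], m[r])]
        r += 1
    return r

def transpose(rows):
    return [list(col) for col in zip(*rows)]

# Hypothetical 3 x 4 example: the second row is twice the first.
A = [
    [1, 2, 3, 4],
    [2, 4, 6, 8],
    [1, 0, 1, 0],
]

row_rank = rank_by_row_reduction(A)                 # span of the rows of A
column_rank = rank_by_row_reduction(transpose(A))   # span of the columns of A
assert row_rank == column_rank
```

Row operations preserve the span of the rows, so the pivot count really is the row rank; feeding in $A^T$ makes the columns of $A$ play the role of rows.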

Transpose #

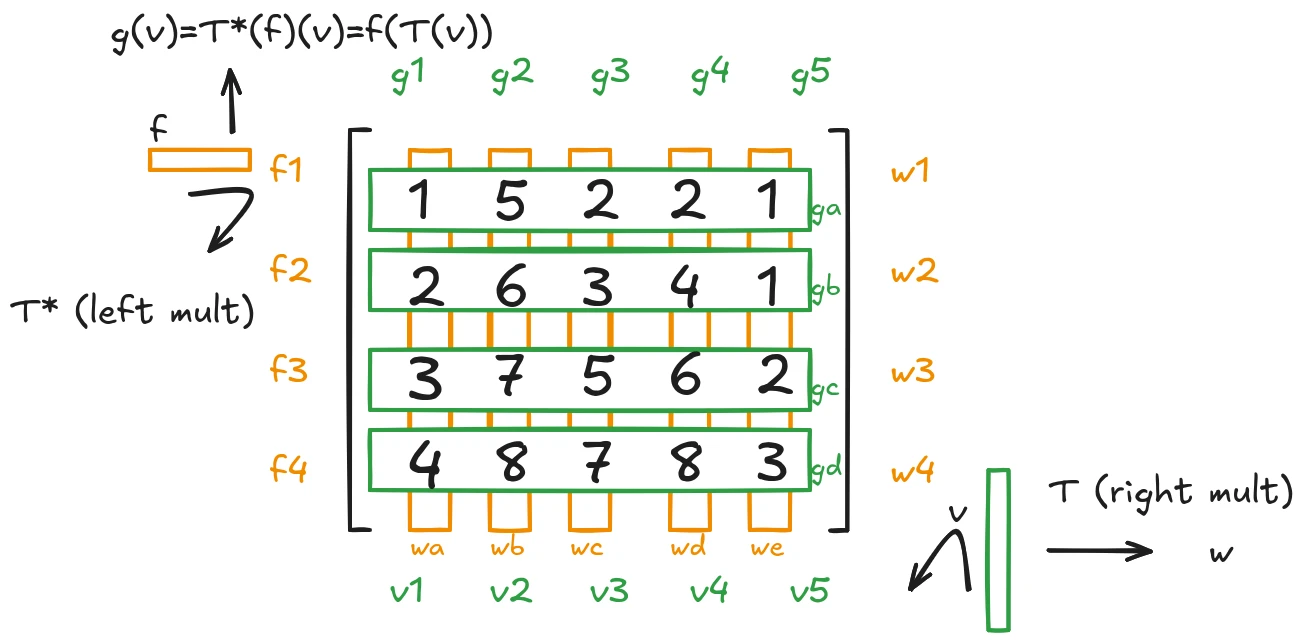

Given a linear map $T: V \to W$, recall that its adjoint (or dual map) $T^* : W^* \to V^*$ is defined by:

$$T^*(f)(v) = f(T(v))$$

for all $f \in W^*$ and $v \in V$.

For $v \in \operatorname{null}T$ and $g \in \operatorname{range}T^*$, there exists $f \in W^*$ such that $g = T^*(f)$. Then:

$$g(v) = T^*(f)(v) = f(T(v)) = f(\mathbf{0}) = 0$$

This demonstrates that $\operatorname{range}T^* \subseteq (\operatorname{null}T)^0$, where $(\operatorname{null}T)^0 = \{g \in V^* : g(v) = 0, \forall v \in \operatorname{null}T\}$ denotes the annihilator of $\operatorname{null}T$.

Consequently:

$$\dim{\operatorname{range}T^*} \leq \dim{(\operatorname{null}T)^0} = \dim{V} - \dim{\operatorname{null}T} = \dim{\operatorname{range}T}$$

To establish the reverse inequality, we examine the matrix representation. Let $\{v_1, \ldots, v_n\}$ and $\{w_1, \ldots, w_m\}$ be bases of $V$ and $W$ respectively, with dual bases $\{g_1, \ldots, g_n\}$ of $V^*$ and $\{f_1, \ldots, f_m\}$ of $W^*$ defined by

$$g_j(v_k) = \begin{cases} 1, & k = j \\ 0, & k \neq j \end{cases} \qquad f_i(w_k) = \begin{cases} 1, & k = i \\ 0, & k \neq i \end{cases}$$

Suppose $T$ has the matrix $A = (a_{ij})$ with respect to these bases, so that $T(v_j) = \sum_{k=1}^{m} a_{kj} w_k$, and $T^*$ has the matrix $A^* = (a^*_{ij})$ with respect to the dual bases, so that $T^*(f_i) = \sum_{k=1}^{n} a^*_{ki} g_k$. Then for the dual map:

$$ a^*_{ji}=\left(\sum_{k=1}^{n}a^*_{ki}g_k\right)(v_j)=T^*(f_i)(v_j) = f_i(T(v_j)) = f_i\left(\sum_{k=1}^{m} a_{kj}w_k\right) = a_{ij} $$

So the matrix of $T^*$ with respect to the dual bases is exactly $A^T$. Since $\dim{\operatorname{range}T^*}$ is the column rank of $A^T$, which is the row rank of $A$, the inequality above says that the row rank of $A$ is at most the column rank of $A$.

Applying the same rank inequality to $A^T$ immediately yields that the column rank of $A$ is at most the row rank of $A$; hence row rank equals column rank.
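The entrywise identity $a^*_{ji} = a_{ij}$ can also be checked mechanically in coordinates. In the sketch below (my own illustration), vectors and functionals are represented by their coordinate lists with respect to the standard basis and its dual basis; the matrix `A` and the helper names `matvec`, `pair`, `unit`, and `transpose` are hypothetical.

```python
# Hypothetical matrix of T: F^3 -> F^2 with respect to the standard bases.
A = [
    [1, 2, 0],
    [3, -1, 4],
]
m, n = len(A), len(A[0])

def matvec(M, x):
    """Coordinates of T(x) = M x."""
    return [sum(a * b for a, b in zip(row, x)) for row in M]

def pair(f, v):
    """Value f(v) of the functional with dual-basis coordinates f on the vector v."""
    return sum(a * b for a, b in zip(f, v))

def unit(k, size):
    """k-th standard basis vector (or dual basis functional) in coordinates."""
    return [1 if t == k else 0 for t in range(size)]

def transpose(M):
    return [list(col) for col in zip(*M)]

# Build the matrix of T^* entry by entry from the definition:
# a*_{ji} = T^*(f_i)(v_j) = f_i(T(v_j))
A_star = [
    [pair(unit(i, m), matvec(A, unit(j, n))) for i in range(m)]
    for j in range(n)
]

assert A_star == transpose(A)
```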

The Connection #

The latter halves of both proofs look almost the same. The connection lies in the fact that the inner product inherently defines a linear functional. Specifically, for any fixed $v_0 \in V$, the map $g_{v_0}: V \to F$ defined by $g_{v_0}(v) = v \cdot v_0$ is linear.

From the previous section, we know that $T^*$ can be identified with $T'$ once $W$ is identified with $W^*$ and $V$ with $V^*$ via dual bases: in coordinates, both maps are multiplication by $A^T$ (written as $y \mapsto A^T y$ on column vectors, or as $y^T \mapsto y^T A$ on row vectors).

In the first proof, we showed that $\operatorname{null}T \perp \operatorname{range}T'$ via inner products. In the second proof, we showed that $\operatorname{range}T^*$ annihilates $\operatorname{null}T$ ($g(x) = 0$ if $x \in \operatorname{null}T$ and $g \in \operatorname{range}T^*$).

When we identify each row $r$ of $A$ with the linear functional $g_r(x) = r \cdot x$, it’s obvious that the first proof is merely a concrete realization of the second.